Building Defensible AI: Why Explainability Matters in Legal Technology

As artificial intelligence becomes more deeply integrated into legal technology, a fundamental question emerges: how can we ensure that AI-assisted decisions meet the rigorous standards of legal proceedings? Much of the answer lies in explainable AI (XAI), a family of methods that aim to make AI systems transparent, interpretable, and therefore defensible in court. For organizations serving law enforcement, prosecutors, and regulated investigative teams, explainability is not optional. It is essential for building trust, ensuring accountability, and preserving legal admissibility.

The Legal Imperative for Explainability

Legal proceedings operate under principles that require transparency and accountability. When evidence, analysis, or conclusions form the basis of legal decisions—whether in criminal investigations, civil litigation, or regulatory enforcement—the parties involved must be able to understand and challenge those findings. Traditional expert testimony relies on professionals who can explain their methodologies, reasoning, and conclusions. AI systems that operate as "black boxes," producing results without explainable processes, fundamentally conflict with these legal requirements.

The conflict is particularly acute in U.S. criminal proceedings. The Sixth Amendment's Confrontation Clause guarantees defendants the right to confront the witnesses against them, and due process guarantees a meaningful opportunity to challenge the prosecution's case. Together, these protections mean that AI-generated evidence must be open to meaningful examination and challenge. An AI system whose outputs cannot be explained makes that challenge difficult, and in some cases practically impossible.

Regulatory Frameworks Mandating Explainability

The legal technology landscape is evolving rapidly. New regulations and proposed rules either require transparency and explainability in AI systems used in legal contexts or depend on them in practice:

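European Union AI Act (2024)

The EU AI Act (Regulation (EU) 2024/1689) entered into force in August 2024, and its obligations phase in over the following years. Its prohibitions applied from February 2025, and most requirements for high-risk systems are scheduled to apply from August 2026. The Act classifies AI systems by risk level. High-risk applications include certain systems used in law enforcement and in the administration of justice, and they face stringent requirements for transparency, human oversight, technical documentation, and record-keeping. These obligations aim to keep AI systems used in legal contexts transparent, explainable, and auditable, so that they protect fundamental rights and support fair outcomes.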

Colorado AI Act (2024)

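Enacted in May 2024 and scheduled to take effect in 2026, the Colorado AI Act (SB 24-205) is one of the first comprehensive state-level AI laws in the United States. It regulates high-risk AI systems, meaning systems that make, or are a substantial factor in making, consequential decisions, including decisions about legal services. Developers and deployers must use reasonable care to protect consumers from algorithmic discrimination, which is unlawful differential treatment or impact based on protected characteristics. When a deployer makes an adverse consequential decision, it must also disclose the principal reasons for that decision. Although the act does not use the word "explainability," meeting these duties in practice depends on being able to explain how a system reaches its outputs.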

Transparency in Frontier Artificial Intelligence Act (California, 2025)

California's Transparency in Frontier Artificial Intelligence Act (SB 53) was signed in September 2025. It requires large developers of frontier AI models to publish frameworks describing how they assess and mitigate potential catastrophic risks, and to report critical safety incidents. The law targets frontier model development rather than legal technology specifically. Even so, the transparency and documentation practices it establishes are relevant to any legal technology application that supports high-stakes decisions.

Proposed Federal Rule of Evidence 707

The Advisory Committee on Evidence Rules of the Judicial Conference of the United States has proposed Rule 707, which was released for public comment in 2025. Under the proposal, machine-generated evidence offered without an expert witness would have to satisfy the same reliability standards that Rule 702 applies to expert testimony. In practice, an AI system's output could then be scrutinized using factors such as testability, known error rates, and peer review. Assessing each of these factors requires some degree of explainability.

The Judicial Duty to State Reasons

In many legal systems, judges have a duty to give reasons for their decisions. As AI tools enter judicial processes, that duty arguably extends to AI-assisted decisions. Such decisions would need two kinds of explanation. A pragmatic explanation shows why the result is justified in legal terms, and a technical explanation shows how the tool produced its output. Providing both keeps decisions transparent and justifiable and helps maintain public trust in the legal system.

Studies on public perception of AI in judicial decision-making suggest that trust depends heavily on how fair and transparent people perceive AI tools to be. When citizens cannot understand how AI systems contribute to legal decisions that affect their lives, trust in the justice system erodes. Explainability is therefore more than a legal requirement: it is a foundation for the legitimacy of, and public confidence in, AI-assisted justice.

Core Principles of Explainable AI for Legal Technology

Building defensible AI for legal applications requires adherence to several core principles:

Interpretability Across Stakeholder Levels

Legal technology must provide explanations that are comprehensible to different audiences. Technical explanations may be necessary for forensic experts, while judges and juries require explanations in accessible language that connects AI findings to legal standards and reasoning. A robust explainable AI system provides layered explanations, from high-level summaries to detailed technical documentation.

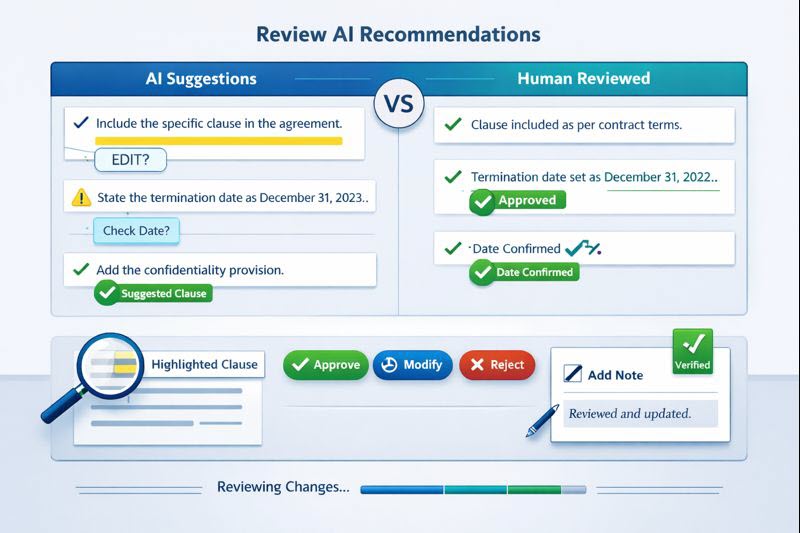
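Layered explanations can be sketched as one record rendered differently per audience. This is a minimal illustration under stated assumptions. The class name `LayeredExplanation`, the audience labels (`"court"`, `"analyst"`, `"expert"`), and the example factor names are all hypothetical and do not describe any particular product.

```python
from dataclasses import dataclass, field


@dataclass
class LayeredExplanation:
    """Hypothetical container holding one finding explained at several depths."""
    summary: str                      # plain-language text for judges and juries
    contributions: dict[str, float]   # per-factor scores for analysts
    technical: dict[str, str] = field(default_factory=dict)  # model details for experts

    def render(self, audience: str) -> str:
        """Return the explanation layer appropriate for the given audience."""
        if audience == "court":
            return self.summary
        # Rank factors by absolute contribution so the most influential come first.
        ranked = sorted(self.contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
        factors = ", ".join(f"{name} ({score:+.2f})" for name, score in ranked)
        if audience == "analyst":
            return f"{self.summary} Main factors: {factors}."
        if audience == "expert":
            details = "; ".join(f"{k}={v}" for k, v in sorted(self.technical.items()))
            return f"{self.summary} Main factors: {factors}. Technical details: {details}."
        raise ValueError(f"unknown audience: {audience!r}")
```

Each layer extends the one above it rather than replacing it, so the plain-language summary a jury hears is word-for-word the opening of the expert report.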

Contestability and Challenge Mechanisms

Explainability alone is insufficient if stakeholders cannot meaningfully challenge AI conclusions. Legal AI systems must incorporate contestability mechanisms that allow parties to question assumptions, test alternative interpretations, and present counterarguments. This requires not only transparency in how conclusions were reached, but also access to the underlying data, model characteristics, and processing steps that contributed to those conclusions.

Auditability and Reproducibility

Legal proceedings often require that evidence and analysis be auditable and reproducible. Explainable AI systems must provide comprehensive audit trails that document every step of the analysis process, allowing independent experts to review and validate the methodology. This includes detailed logs of data inputs, model configurations, processing steps, and output generation.
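One common way to make an audit trail verifiable is a hash chain. Each record stores the SHA-256 hash of its own contents combined with the previous record's hash, so editing any earlier entry breaks every hash after it. The sketch below is a hypothetical minimal version built on Python's standard `hashlib` and `json`. The `AuditTrail` class and the step names are illustrative assumptions.

```python
import hashlib
import json


def _digest(entry: dict, prev_hash: str) -> str:
    # Canonical JSON (sorted keys, no whitespace) so an entry always hashes identically.
    payload = json.dumps(entry, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


class AuditTrail:
    """Append-only log in which each record commits to the hash of the previous one."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first record

    def __init__(self) -> None:
        self.records: list[dict] = []

    def append(self, step: str, **details) -> str:
        """Record one processing step and return its hash."""
        prev = self.records[-1]["hash"] if self.records else self.GENESIS
        entry = {"index": len(self.records), "step": step, "details": details}
        record = {**entry, "prev_hash": prev, "hash": _digest(entry, prev)}
        self.records.append(record)
        return record["hash"]

    def verify(self) -> bool:
        """Recompute every hash; any edited, reordered, or removed record fails."""
        prev = self.GENESIS
        for i, rec in enumerate(self.records):
            entry = {"index": rec["index"], "step": rec["step"], "details": rec["details"]}
            if rec["index"] != i or rec["prev_hash"] != prev or rec["hash"] != _digest(entry, prev):
                return False
            prev = rec["hash"]
        return True
```

A chain like this is tamper-evident rather than tamper-proof. Someone able to rewrite every record could also recompute every hash, so the latest hash is typically anchored somewhere outside the system's control.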

Error Transparency and Uncertainty Quantification

Perfect accuracy is unattainable for any analytical system. Explainable AI systems must transparently communicate uncertainty, confidence levels, and potential error sources. This allows legal professionals to appropriately weigh AI findings against other evidence and make informed decisions about how much reliance to place on AI-generated conclusions.
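A concrete way to report uncertainty about an error rate is a confidence interval rather than a single number. The sketch below computes the Wilson score interval, a standard interval for a binomial proportion. The function name and the example counts are illustrative assumptions, not drawn from any real validation study.

```python
import math


def wilson_interval(errors: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for an observed error rate (z=1.96 gives roughly 95%)."""
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("need trials > 0 and 0 <= errors <= trials")
    p = errors / trials
    denom = 1 + z * z / trials
    # The interval's center is pulled toward 0.5, which keeps it sensible near 0 or 1.
    center = (p + z * z / (2 * trials)) / denom
    half_width = (z / denom) * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials))
    return max(0.0, center - half_width), min(1.0, center + half_width)
```

Suppose a system made 12 errors on 400 validation cases, an observed rate of 3%. The interval then runs from roughly 1.7% to 5.2%. That range is a more honest statement of reliability than "3% error rate" alone. Unlike the simpler normal approximation, the Wilson interval still gives a non-zero upper bound when no errors were observed.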

Technical Approaches to Explainability

Modern XAI techniques provide various methods for achieving explainability in legal AI systems:

Model-Agnostic Explanations

Techniques like LIME (Local Interpretable Model-agnostic Explanations) and SHAP (SHapley Additive exPlanations) can explain the predictions of essentially any machine learning model, regardless of its underlying architecture. LIME fits a simple, interpretable surrogate model around a single prediction. SHAP attributes the prediction to individual input features using Shapley values from cooperative game theory. Because both methods explain one prediction at a time, they are valuable for explaining individual case analyses.
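The Shapley-value idea behind SHAP can be illustrated with a brute-force computation. This is a minimal sketch, not the `shap` library. It enumerates every coalition of features exactly, which is only feasible for a handful of features, whereas SHAP's explainers rely on approximations or model-specific shortcuts. It assumes a black-box `predict` function and a `baseline` input whose values stand in for "absent" features. Both are hypothetical inputs supplied by the caller.

```python
from itertools import combinations
from math import factorial
from typing import Callable, Sequence


def shapley_values(predict: Callable[[Sequence[float]], float],
                   x: Sequence[float], baseline: Sequence[float]) -> list[float]:
    """Exact Shapley attributions for one prediction, treating `predict` as a black box.

    A feature that is absent from a coalition takes its baseline value.
    Cost grows as 2**n, so this is only practical for a few features.
    """
    n = len(x)

    def value(coalition: frozenset[int]) -> float:
        point = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(point)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight for coalitions of this size that exclude feature i.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = frozenset(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi
```

For a linear model, each attribution reduces to the feature's weight times its distance from the baseline. In all cases the attributions sum to the prediction minus the baseline prediction (the efficiency property), which makes a quick sanity check.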

Feature Importance Analysis

Understanding which features or evidence elements most influenced an AI conclusion is crucial for legal defensibility. Feature importance techniques rank the factors that drive a model's decisions, either for an individual case (local importance) or across many cases (global importance). This lets legal professionals see which evidence carried the most weight.
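Permutation importance is one widely used global technique. It shuffles one feature's values across a dataset and measures how much the model's accuracy drops. The sketch below is a minimal pure-Python version that assumes a black-box `predict` function returning class labels. scikit-learn provides a full implementation as `sklearn.inspection.permutation_importance`.

```python
import random
from typing import Callable, Sequence


def permutation_importance(predict: Callable[[Sequence[float]], int],
                           X: list[list[float]], y: list[int],
                           n_repeats: int = 10, seed: int = 0) -> list[float]:
    """Mean drop in accuracy when each feature column is randomly shuffled."""
    rng = random.Random(seed)  # seeded so the analysis is reproducible

    def accuracy(rows: list[list[float]]) -> float:
        return sum(predict(r) == t for r, t in zip(rows, y)) / len(y)

    base = accuracy(X)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # breaks the link between feature j and the labels
            shuffled = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            drops.append(base - accuracy(shuffled))
        importances.append(sum(drops) / n_repeats)
    return importances
```

A feature the model ignores scores exactly zero, and a feature the model relies on shows a clear accuracy drop. Fixing the random seed matters in a legal setting because an opposing expert must be able to reproduce the numbers.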

Counterfactual Explanations

Counterfactual explanations show how changing specific inputs would have altered the AI's conclusions. These explanations are particularly valuable in legal contexts because they help explain not just what the AI decided, but what factors were decisive. For example, a counterfactual explanation might show that removing a specific piece of evidence would have changed the AI's assessment, highlighting that evidence's critical importance.
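The evidence-removal example can be turned into a simple counterfactual search: find the smallest set of evidence items whose removal flips the decision. This minimal sketch assumes the decision is available as a black-box function of the set of evidence items. The evidence names, weights, and threshold in the usage example are hypothetical, and real counterfactual methods also consider changing values, not only removing items.

```python
from itertools import combinations
from typing import Callable, Optional


def minimal_removal_counterfactual(decide: Callable[[frozenset[str]], bool],
                                   evidence: set[str],
                                   max_size: int = 3) -> Optional[frozenset[str]]:
    """Smallest set of evidence items whose removal flips the decision, or None.

    Subsets are tried in order of size, so the first hit is minimal in size
    (though other subsets of the same size may also flip the decision).
    """
    full = frozenset(evidence)
    original = decide(full)
    for size in range(1, max_size + 1):
        for removed in combinations(sorted(evidence), size):  # sorted: deterministic order
            if decide(full - frozenset(removed)) != original:
                return frozenset(removed)
    return None


# Hypothetical weighted-evidence model used only to illustrate the search.
WEIGHTS = {"cctv_match": 0.5, "phone_location": 0.3, "witness_statement": 0.15, "receipt": 0.1}


def flagged(items: frozenset[str]) -> bool:
    return sum(WEIGHTS[i] for i in items) >= 0.6
```

Here `minimal_removal_counterfactual(flagged, set(WEIGHTS))` returns `frozenset({"cctv_match"})`: without that single item, the remaining weight falls below the threshold. That is the kind of "decisive factor" statement the paragraph above describes.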

Attention Mechanisms and Visualizations

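For complex AI models processing visual or textual evidence, attention mechanisms can highlight which portions of the evidence the model focused on most. Visual heatmaps and attention overlays make these explanations immediately comprehensible, helping investigators and legal professionals understand what the AI "saw" in the evidence. Attention weights should still be read with care: research has shown that they do not always faithfully reflect why a model produced a given output, so they are best corroborated with other techniques.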
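The weights behind such heatmaps come from scaled dot-product attention: a softmax over query-key similarities. The sketch below computes those weights for toy vectors and renders a text-based heatmap. The vectors, tokens, and function names are illustrative assumptions. In a real model, the queries and keys are learned projections inside the network.

```python
import math


def attention_weights(query: list[float], keys: list[list[float]]) -> list[float]:
    """Scaled dot-product attention weights: softmax(q . k_i / sqrt(d))."""
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    m = max(scores)  # subtract the max before exponentiating, for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def text_heatmap(tokens: list[str], weights: list[float], width: int = 10) -> str:
    """Render each token with a bar proportional to its attention weight."""
    peak = max(weights)
    return "\n".join(
        f"{tok:<12}{'#' * round(width * w / peak):<{width}} {w:.2f}"
        for tok, w in zip(tokens, weights)
    )
```

The weights always sum to one, and the token whose key best matches the query receives the longest bar. As noted above, a long bar shows where the model looked, not necessarily why it decided.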

Building Explainability into Legal AI Systems

Organizations developing AI for legal applications must integrate explainability from the earliest stages of system design:

Requirements Engineering

Explainability requirements must be specified alongside functional requirements. This includes defining what level of explanation is needed, who needs to understand the explanations, and what format explanations should take for different use cases.
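Recording explanation requirements as structured data makes gaps easy to find before development begins. The schema below is a hypothetical sketch: its field names mirror the three questions in the paragraph above (level, audience, format). The `uncovered` helper lists every use case and audience pair that nobody has specified yet.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExplanationRequirement:
    """Hypothetical schema for one explainability requirement in a system spec."""
    use_case: str          # what the system is used for
    audience: str          # who must understand the explanation
    explanation_type: str  # e.g. "plain-language summary", "feature attributions"
    output_format: str     # e.g. "court exhibit (PDF)", "interactive dashboard"


def uncovered(requirements: list[ExplanationRequirement],
              use_cases: list[str], audiences: list[str]) -> list[tuple[str, str]]:
    """List (use_case, audience) pairs that have no explanation requirement yet."""
    covered = {(r.use_case, r.audience) for r in requirements}
    return [(u, a) for u in use_cases for a in audiences if (u, a) not in covered]
```

A check like this can run in the same review pipeline as functional requirements, so a missing explanation for a given audience surfaces as a spec defect rather than during cross-examination.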

Model Selection and Architecture

Some AI architectures are inherently more explainable than others. While complex deep learning models may offer superior accuracy, simpler models like decision trees or rule-based systems may provide better explainability. The choice between model complexity and explainability depends on the specific legal application and the acceptable trade-offs.
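An inherently explainable alternative is a points-based rule system, where every decision carries the list of rules that produced it. The sketch below assumes a hypothetical document-triage task. The rule names, point values, and threshold are illustrative only and do not describe any validated scoring scheme.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    """One human-readable rule; its name doubles as the explanation when it fires."""
    name: str
    condition: Callable[[dict], bool]
    points: int


def score_with_reasons(case: dict, rules: list[Rule], threshold: int) -> tuple[bool, list[str]]:
    """Apply every rule and return the decision together with the reasons for it."""
    fired = [rule for rule in rules if rule.condition(case)]
    total = sum(rule.points for rule in fired)
    reasons = [f"{rule.name} ({rule.points:+d})" for rule in fired]
    reasons.append(f"total {total} vs threshold {threshold}")
    return total >= threshold, reasons


# Hypothetical triage rules; names, points, and threshold are illustrative only.
TRIAGE_RULES = [
    Rule("mentions an account number", lambda c: c.get("has_account_number", False), 2),
    Rule("dated within the review period", lambda c: c.get("in_date_range", False), 1),
    Rule("sent by a named custodian", lambda c: c.get("from_custodian", False), 2),
    Rule("automated newsletter", lambda c: c.get("is_newsletter", False), -3),
]
```

The trade-off is the one the paragraph describes. A model like this cannot capture subtle patterns a deep network might learn, but each output is a short, checkable argument that opposing counsel can contest rule by rule.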

Explanation Generation Infrastructure

Building explainability requires dedicated infrastructure for generating, storing, and presenting explanations. This includes explanation generation engines, storage systems for explanation artifacts, and user interfaces that present explanations in accessible formats.

Validation and Testing Frameworks

Explainability claims must be validated through rigorous testing. This includes testing whether explanations accurately reflect the model's behavior, whether explanations are comprehensible to intended audiences, and whether explanations support the legal use case requirements.
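One way to test whether an explanation reflects the model's behavior is a deletion test. Reset the features the explanation ranks highest to a baseline, and check that the output moves more than it does when the same number of randomly chosen features are reset. The sketch below is a minimal version of that idea. The function name, the baseline convention, and the scoring (a simple difference of mean absolute changes) are assumptions rather than a standardized metric.

```python
import random
from typing import Callable, Sequence


def deletion_gap(predict: Callable[[Sequence[float]], float],
                 x: Sequence[float], baseline: Sequence[float],
                 attributions: Sequence[float], k: int,
                 trials: int = 200, seed: int = 0) -> float:
    """Output change from resetting the k top-attributed features, minus the average
    change from resetting k random features. Positive means better than chance."""
    n = len(x)
    reference = predict(x)

    def change_when_reset(indices: set[int]) -> float:
        point = [baseline[i] if i in indices else x[i] for i in range(n)]
        return abs(reference - predict(point))

    # Features the explanation claims matter most, by absolute attribution.
    ranked = sorted(range(n), key=lambda i: abs(attributions[i]), reverse=True)
    top_change = change_when_reset(set(ranked[:k]))
    rng = random.Random(seed)  # seeded so the test is reproducible
    random_change = sum(
        change_when_reset(set(rng.sample(range(n), k))) for _ in range(trials)
    ) / trials
    return top_change - random_change
```

A faithful explanation should score clearly positive. A misleading one, which highlights features the model barely uses, scores near zero or below, which flags it before it reaches a courtroom.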

ClearPath.AI's Approach to Defensible AI

At ClearPath.AI, explainability isn't an afterthought—it's a foundational principle embedded throughout our platform. Our approach ensures that every AI decision can be understood, validated, and defended in legal proceedings.

Comprehensive Audit Trails

Every analysis performed by our AI systems generates detailed audit trails documenting all inputs, processing steps, model configurations, and outputs. These trails are designed to be both human-readable and machine-verifiable, supporting independent review and validation.

Layered Explanation Architecture

Our platform provides explanations at multiple levels of detail. High-level summaries explain findings in accessible language suitable for legal briefs and court presentations. Detailed technical documentation provides the depth required for expert testimony and adversarial examination.

Interactive Explanation Interfaces

We've developed interactive interfaces that allow users to explore AI reasoning processes. Investigators can drill down into specific findings, view feature importance analyses, examine attention patterns, and test counterfactual scenarios—all within a user-friendly interface designed for legal professionals.

Continuous Validation and Improvement

Our explainability capabilities undergo continuous validation through testing with legal experts, review of court proceedings where our technology has been presented, and iterative improvement based on feedback from prosecutors, defense attorneys, and judges.

The Future of Explainable AI in Legal Technology

As AI technology continues to advance, explainability techniques will evolve as well. Emerging research in areas like causal reasoning, symbolic AI, and hybrid neuro-symbolic systems promises to deliver AI that is both more capable and more explainable.

However, technical explainability alone is insufficient. The legal technology industry must also develop standards, best practices, and professional guidelines for explainability in legal AI systems. This includes defining what constitutes adequate explanation for different legal contexts, establishing validation methodologies, and creating training programs for legal professionals to effectively evaluate and present AI explanations.

The organizations that succeed in this space will be those that recognize explainability not as a compliance burden, but as a strategic differentiator that builds trust, ensures legal defensibility, and ultimately delivers better outcomes for the justice system.

Conclusion

Explainability in legal AI is not negotiable—it's a fundamental requirement for systems that operate in contexts where lives, liberty, and justice are at stake. As regulations evolve and judicial expectations clarify, organizations serving legal markets must prioritize explainability throughout the entire AI development lifecycle. By building defensible, explainable AI systems, we can harness the power of artificial intelligence to enhance legal processes while maintaining the transparency, accountability, and fairness that define our justice system.